دانلود رایگان مقاله تشخیص بافت پارچه با بهره گیری از احساسات بصری و لامسه

چکیده

بینایی و لامسه جزو دو حس مهم برای انسان ها بوده و اطلاعاتی مکمل برای درک و فهم محیط ارائه می نمایند. علاوه بر این، روبات ها نیز قادر به بهره مندی از چنین توانایی های سنجش چندمنظوره می باشند. در مطالعه حاضر، برای اولین بار (با توجه به اطلاعات در دسترس) به منظور شناسایی بافت پارچه با استفاده از احساسات لامسه ای و بصری، روش تلفیقی جدید تحت عنوان تجزیه و تحلیل آماری کورایانس عمیق (DMCA) با فراگیری فضای پنهان مشترک با هدف به اشتراک گذاری ویژگی ها از طریق حسگرهای بصری و لامسه، معرفی شده است. نتایج به دست آمده از این الگوریتم با بهره گیری از مجموعه داده های جدید که شامل داده های بصری و لامسه جفت شده مربوط به بافت پارچه، چنین نشان داده اند که با استفاده از چارچوب DMCA معرفی شده، عملکرد شناسایی بافت به اندازه بیش از 90% بهبود می یابد. علاوه بر این، بدین نتیجه رسیده شده است که عملکرد مشاهده شده در حسگر بصری و حسگر لامسه از طریق اجرا و پیاده سازی فضای تمثیلی مشترک، نسبت به روش فراگیری از طریق داده های یکپارچه، قادر به بهبود و اصلاح می باشند.

1. مقدمه

انسان ها دارای تجارب وسیعی از "لمس به منظور دیدن" و "دیدن به منظور حس نمودن" می باشند. به عنوان مثال، هنگامیکه فردی قصد درک و آشنایی با جسمی را دارد، به منظور "احساس" ویژگی های اصلی آن نظیر اشکال و بافت ها، ابتدا با چشمان خویش به جسم نگاه نموده و بدین ترتیب احساسات لامسه ای خویش را برآورد می نماید. چنین ویژگی های بصری پس از درک و آشنایی با جسم غیرقابل مشاهده می شوند چرا که بینایی توسط دست مستور شده و اثر خویش را از دست می دهد. در چنین وضعیتی، حس لامسه در دست به منظور کمک در "درک" ویژگی های مربوطه، توزیع می گردد. از طریق ردیابی و به اشتراک گذاری این سرنخ ها از طریق حس بینایی و لامسه، می توان اشیا (اجسام) را بهتر "درک" یا "احساس" نمود.

در مطالعه حاضر، شناسایی بافت پارچه تحت عنوان حوزه بررسی به منظور اجرا و پیاده سازی این مکانیزم به اشتراک گذاری ویژگی بین حس بینایی و لامسه در روبات ها، در نظر گرفته شده است: حس لامسه قادر به درک بافت های بسیار دقیق نظیر الگوی توزیع نخ در لباس می باشد در حالیکه حس بینایی الگوهای مشابه موجود در بافت (هرچند گاها این بافت درک شده کاملا تار می باشد) را شناسایی می نماید. علاوه بر این، عواملی که تنها در یک ویژگی (خصوصیت) وجود دارند منجر به بدتر شدن عملکرد شناسایی شده اند. به عنوان مثال، مغایرت رنگی در پارچه از طریق بینایی قابل مشاهده بوده اما از منظر حس لامسه قابل درک نمی باشد. هدف از مطالعه حاضر استخراج اطلاعات مشترک از این دو ویژگی و در عین حال حذف این عوامل، می باشد. به منظور فراگیری فضای نهان مشترک حس بینایی و لامسه، در مطالعه حاضر، چارچوبی جدید از ائتلاف عمیق مبنی بر شبکه های عصبی عمیق و تجزیه و تحلیل حداکثر واریانس، معرفی شده است. مجموعه داده ای جدید که شامل جفت داده های بصری و لامسه می باشد نیز در مطالعه، ارائه شده اند.

در مطالعات پیشین به صورت معمول به منظور تایید و تصدیق تماس ها، به جای بهره گیری از حسگر GelSight با وضوح بالا (960 در 720) از حسگرهای لمسی با وضوح پایین (به عنوان مثال، حسگر لامسه Weiss دارای 14 در 6 تاکسل) برای ثبت و ضبط بافت های دقیق که مساله ای دشوارتر از تایید وتصدیق تماس ها می باشد، استفاده نموده اند. حسگر GelSight شامل دوربینی در بخش تحتانی و قطعه ژله ای الاستومتریک پوشیده شده با غشای بازتابنده در بخش فوقانی، می باشد. الاستومر به منظور لمس شکل هندسی سطح و بافت اشیایی که در تعامل با آنها می باشد، تغییر شکل می دهد. سپس این تغییر شکل توسط دوربین در زیر اشاعه نور از لدهایی که در جهت های مختلف با هدف هدایت صفحات نوری به سمت پرده (پوسته) جایگذاری شده اند، ثبت و ضبط می گردد. علاوه بر این، بر اساس اطلاعات جمع آوری شده، پژوهش حاضر اولین مطالعه ایست که هم تصاویر لامسه ای و هم داده های بصری را برای شناسایی بافت مورد ارزیابی و بررسی قرار داده است.

2. مجموعه داده های مربوط به لباس وی تک

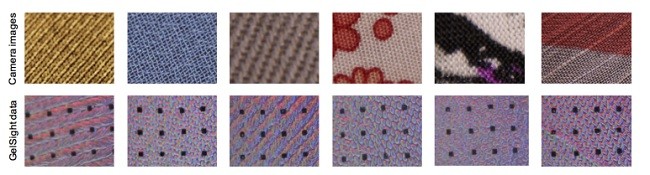

مجموعه ای از داده های بصری و لامسه ای مربوط به لباس را با بهره گیری از 100 قطعه لباس روزانه تحت عنوان مجموعه داده ای لباس وی تک، جمع آوری شده اند. البسه به کار گرفته شده از گونه های مختلف و از پارچه هایی با جنس های متنوع با بافت های مختلف، بوده اند. در مقایسه با داده های موجود که تنها شامل تصاویر بصری [2] یا برداشت های لامسه ای [3] از سطوح بافت می باشند، داده هایی که بردرگیرنده دو ویژگی نظیر بینایی و لامسه هستند، هنگامیکه پارچه به مسطح بوده، جمع آوری شده اند. در ابتدا تصاویر رنگی با استفاده از دوربین کانن T20i SLR به صورت تقریبا موازی با خود لباس همراه با چرخش های مختلف در سطح گرفته شده اند تا بدین ترتیب در مجموع بازای هر لباس، ده تصویر در دسترس باشد. در نتیجه، 1000 عکس دیجیتالی در پایگاه داده ای وی تک، موجود می باشد. داده های لامسه ای توسط حسگر GelSight جمع آوری شده اند. همانطور که در شکل 1a نیز نمایش داده شده، فردی حسگر GelSight را در دست گرفته و بر سطح پاچه لباس مد نظر در جهات معمول، فشار می دهد. همانظور که در شکل 1b نیز قابل مشاهده می باشد، با فشار حسگر بر سطح پارچه لباس، مجموعه ای از تصایر مربوط به بافت لباس توسط GelSight گرفته و ذخیره می شوند. در مجموع 96536 تصویر با بهره گیری از GelSight جمع آوری شده است. تمامی داده ها بر اساس ساختار سطحی پارچه لباس بوده و هرگونه زینت سخت موجود در سطح البسه به منظور جلوگیری از نمایان شدن در تصاویر گرفته شده توسط Gelsight یا تصاویر دیجیتالی، حذف شده است. نمونه هایی از تصاویر دیجیتالی و داده های جمع آوری شده توسط GelSight در شکل2ف نمایش داده شده است.

3. روش شناسی و نتایج

به منظور مطابقت داده های بصری و لامسه ای که به خوبی جفت نشده اند، با بهره گیری از تجزیه و تحلیل عمیق حداکثر واریانس (DMCA) ابتدا تمثیل های این دو ویژگی از طریق عبور آنها از لایه های چندگانه انباشته جداگانه تبدیل غیرخطی، محاسبه و سپس با پیاده سازی و اجرای تجزیه و تحلیل حداکثر واریانس، فضای پنهان مشترک برای این دو ویژگی به طوریکه کوواریانس بین این دو تمثیل حداکثر مقدار خویش باشد، محاسبه شده است. به منظور ارزیابی و بررسی روش DMCA معرفی شده در شناسایی بافت لباس، از مجموعه داده ای لباس وی تک استفاده شده است.

ابتدا از روش شناسایی تک نمایی کلاسیک با بهره گیری از داده های مربوط به هریک از ویژگی ها، استفاده شده است. هنگام بهره گیری از داده های مربوط به حسگر GelSight و دوربین دیجیتال برای مجموعه آموزش و آزمون، میزان دقت برآورد شده برای شناسایی بافت پارچه برابر با 83.4% یا 85.9% می باشد. این ارقام نشان دهنده، تمثیل های فراگرفته شده توسط شبکه های عمیق به کارگرفته شده برای شناسایی بافت با استفاده از هریک از ویژگی ها به صورت جداگانه، می باشند. با این حال، به ویژه در حوزه رباتیک، دستیابی به داده های مربوط به ویژگی خاص که برای آموزش به کار گرفته شده اند، امری آسان نمی باشد: داده های لامسه ای اغلب در دسترس نبوده یا جمع آوری آنها بسیار دشوار می باشد؛ علاوه بر این، دسترسی به بافت های دارای جزئیات متعلق به اجسام (اشیا) حتی از طریق دوربین های دیجیتال، امری ممکن و آسان نمی باشد. بدین منظور، به منظور آموزش مدل برای شناسایی بافت متقاطع پارچه با بهره گیری از یک ویژگی حسی در عین حال نیز از داده های ویژگی دیگر برای اجرای مدل استفاده شده است. این امر بر اساس این فرض که ویژگی های مشابه بصری بافت ها به احتمال زیاد دارای بافت های لامسه ای مشابه می باشند و برعکس، شکل گرفته است.

شایان ذکر است که روش مقطعی شناسایی بافت پارچه عملکردی بدتر نسبت به موارد انجام گرفته توسط روش تک نمایی، است. هنگام ارزیابی داده های برگرفته شده از حسگر GelSight برای آموزش با بهره گیری از مدل آموزش داده شده توسط داده های بصری، دقت به دست آمده برابر با 16.7% بوده است. از سویی دیگر، در وضعیت برعکس، دقت به دست آمده تنها برابر با 14.8% بوده است. دلایل احتمالی این امر، عواملیست که منجر به تفاوت در شکل ظاهری الگوی پارچه مشابه در دو ویژگی مختلف، می باشند. در دوربین، دید، مقیاس، چرخش، انتقال، واریانس رنگ و نور قابل مشاهده می باشند. درمورد احساس لامسه نیز، برداشت های مختلف از الگوهای لباس با توجه به میزان فشارهای مختلف اعمال شده توسط حسگر بر سطح پارچه، تغییر می نمایند. این تفاوت ها بدین معنا می باشند که امکان مناسب نبودن ویژگی های فراگرفته شده توسط یکی از روش ها برای روش دیگر، وجود دارد. به منظور استخراج ویژگی های همبسته بین حس بینایی و لامسه، و حفظ این ویژگی ها برای شناسایی بافت پارچه و در عین حال کاهش تفاوت های بین این دو روش، از روش DMCA معرفی شده به منظور دستیابی به تمثیل مشترک از بافت ها برای این دو ویژگی ها، بهره گرفته شده است.

Abstract

Vision and touch are two of the important sensing modalities for humans and they offer complementary information for sensing the environment. Robots could also benefit from such multi-modal sensing ability. In this paper, addressing for the first time (to the best of our knowledge) texture recognition from tactile images and vision, we propose a new fusion method named Deep Maximum Covariance Analysis (DMCA) to learn a joint latent space for sharing features through vision and tactile sensing. Results of the algorithm on a newly collected dataset of paired visual and tactile data relating to cloth textures show that a good recognition performance of greater than 90% can be achieved by using the proposed DMCA framework. In addition, we find that the perception performance of either vision or tactile sensing can be improved by employing the shared representation space, compared to learning from unimodal data.

I. INTRODUCTION

We humans have much experience of “touching to see” and “seeing to feel”. For instance, when we intend to grasp an object, we are likely to glimpse it first with our eyes to “feel” its key features, i.e., shapes and textures, and estimate haptic sensations. Such visual features become unobservable after the object is grasped since vision is occluded by the hand and becomes ineffective. In this case, touch sensation distributed in the hand can assist us to “see” corresponding features. By tracking and sharing these clues through vision and tactile sensing, we can “see” or “feel” the object better.

In this paper, we take cloth texture recognition as the test arena to apply this feature sharing mechanism between vision and tactile sensing in robotics: the tactile sensing can perceive very detailed texture such as yarn distribution pattern in the cloth whereas vision can capture similar texture pattern (though sometimes is quite blurry). There are also factors that only exist in one modality that may deteriorate the recognition performance. For instance, color variance of cloth is present in vision but is not demonstrated in tactile sensing. We aim to extract the shared information of both modalities while eliminating these factors. We propose a novel deep fusion framework based on deep neural networks and maximum covariance analysis to learn a joint latent space of vision and tactile sensing. A newly collected dataset of paired visual and tactile data is also introduced.

In the prior works low-resolution tactile sensors (for instance a Weiss tactile sensor of 14×6 taxels) are commonly used to confirm the contacts, instead we use a high-resolution GelSight sensor of (960×720) to capture more detailed textures which is a much harder problem than just confirming the contacts. The GelSight sensor consists of a camera at the bottom and a piece of elastometric gel coated with a reflective membrane on the top. The elastomer deforms to take the surface geometry and texture of the objects that it interacts with. The deformation is then recorded by the camera under illumination from LEDs that project from various directions through light guiding plates towards the membrane. Furthermore, to the best of the authors’ knowledge, this is the first work to explore both tactile images and vision data for texture recognition.

II. VITAC CLOTH DATASET

We have built a clothing dataset of 100 pieces of everyday clothing of both visual and tactile data, which we call the ViTac Cloth dataset. The clothing are of various types and are made of a variety of fabrics with different textures. In contrast to available datasets with only either visual images [2] or tactile readings [3] of surface textures, the data of two modalities, i.e., vision and touch, was collected while the cloth was lying flat. The color images were first taken by a Canon T2i SLR camera, keeping its image plane approximately parallel to the cloth with different in-plane rotations for a total of ten images per cloth. As a result, there are 1,000 digital camera images in the ViTac dataset. The tactile data was collected by a GelSight sensor. As illustrated in Fig. 1a, a human holds the GelSight sensor and presses it on the cloth surface in the normal direction. As the sensor presses the cloth, a sequence of GelSight images of the cloth texture is captured, as shown in Fig. 1b. In total 96,536 GelSight images were collected. All the data is based on the shell fabric of the cloth; any hard ornaments on the clothes were precluded from appearing in the view of GelSight or digital camera. Examples of digital camera images and GelSight data are shown in Fig. 2.

III. METHODOLOGY AND RESULTS

To match the weakly-paired vision and tactile data, Deep Maximum Covariance Analysis (DMCA) first computes representations of the two modalities by passing them through separate multiple stacked layers of a nonlinear transformation and then learns a joint latent space for two modalities by applying maximum covariance analysis such that the covariance between two representations as high as possible. We evaluate the proposed DMCA method on cloth texture recognition using the ViTac Cloth dataset.

We first perform the classic unimodal recognition task using data of each single modality. When we use the data from the GelSight sensor or digital camera for both training and test set, an accuracy of 83.4% or 85.9% can be achieved for the cloth texture recognition. This shows that the feature representations learned by deep networks enable texture recognition with either modality alone. However, especially for robotics, training data of a particular modality is not always easy to obtain: tactile data is neither commonly available nor easy to collect; also, detailed textures of objects are not always easy to access by digital cameras either. To this end, next we explore the cross-modal cloth texture recognition to train a model using one sensing modality while applying the model on data from the other modality. This is based on the assumption that visually similar textures are more likely to have similar tactile textures, and vice versa.

Perhaps surprisingly, the cross-modal cloth texture recognition performs much worse than the unimodal cases. When we evaluate the test data from GelSight sensor using the model trained on vision data, an accuracy of only 16.7% is achieved. It is even worse the other way around, only an accuracy of 14.8% is obtained. The probable reasons are factors that make the same cloth pattern appear different in the two modalities. In camera vision, scaling, rotation, translation, color variance and illumination are present. For tactile sensing, impressions of cloth patterns change due to different forces applied to the sensor while pressing. These differences mean that the learned features from one modality may not be appropriate for the other. To extract correlated features between vision and tactile sensing and preserve these features for cloth texture recognition while mitigating the differences between two modalities, we explore the proposed DMCA method to achieve a shared representation of textures for both modalities.

چکیده

1. مقدمه

2. مجموعه داده های مربوط به لباس وی تک

3. روش شناسی و نتایج

Abstract

I. INTRODUCTION

II. VITAC CLOTH DATASET

III. METHODOLOGY AND RESULTS

REFERENCES