End-to-end energy models for edge computing-based IoT platforms

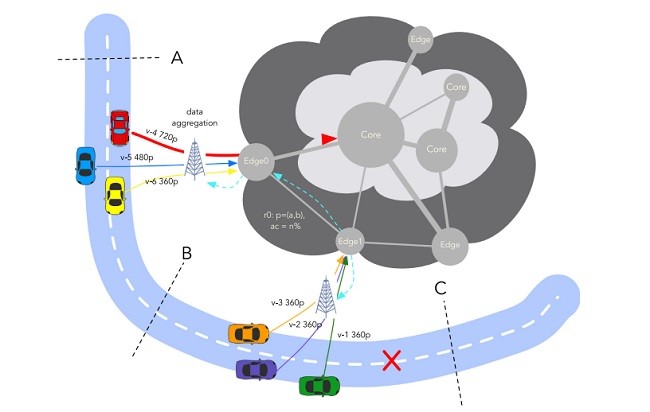

Highlights

• Estimates the energy consumption of IoT applications.

• End-to-end energy cost model for data stream analysis in IoT.

• Comparison of edge and core cloud solutions for IoT.

Abstract

Internet of Things (IoT) is bringing an increasing number of connected devices that have a direct impact on the growth of data and energy-hungry services. These services rely on Cloud infrastructures for storage and computing capabilities, and their architecture is being transformed into a more distributed one based on edge facilities provided by Internet Service Providers (ISPs). Yet, among the IoT devices, the communication network and the Cloud infrastructure, it is unclear which part consumes the most energy. In this paper, we provide end-to-end energy models for Edge Cloud-based IoT platforms. These models are applied to a concrete scenario: the analysis of data streams produced by cameras embedded in vehicles. The validation combines measurements on real test-beds running the targeted application with simulations on well-known simulators to study scaling up to an increasing number of IoT devices. Our results show that, for our scenario, the edge Cloud part embedding the computing resources consumes 3 times more than the IoT part, which comprises the IoT devices and the wireless access point.
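
The abstract names three parts: IoT, network and Cloud. As a reading aid, these can be written as one sum. This is a minimal sketch that assumes the parts are additive over an observation period $T$. The symbols ($N$, $E_{\text{dev},i}$, $E_{\text{AP}}$, $E_{\text{net}}$, $E_{\text{cloud}}$) are our own notation and not necessarily the paper's:

```latex
E_{\text{e2e}}(T) = E_{\text{IoT}}(T) + E_{\text{net}}(T) + E_{\text{cloud}}(T),
\qquad
E_{\text{IoT}}(T) = \sum_{i=1}^{N} E_{\text{dev},i}(T) + E_{\text{AP}}(T)
```

The terms are as follows:

- $N$ is the number of IoT devices.
- $E_{\text{AP}}$ is the energy of the wireless access point.
- $E_{\text{net}}$ covers the network devices between the access point and the Cloud.
- $E_{\text{cloud}}$ covers the edge or core servers.

In this notation, the reported result for the camera scenario reads roughly $E_{\text{cloud}}^{\text{edge}} \approx 3\,E_{\text{IoT}}$.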

1. Introduction

In 2011, Ericsson and Cisco started to announce that 50 billion devices would be connected to the Internet by 2020 [1,2]. Indeed, connected devices are progressively invading our everyday lives with ever-widening application fields: personal health equipment, intelligent buildings, smart grids, connected vehicles, etc. The count in 2016 was under 20 billion devices, including Internet-of-Things (IoT) devices, smartphones, tablets and computers [3]. Current forecasts estimate approximately 30 billion devices by 2020 [3].

All these objects, linked to telecommunication networks (most commonly the Internet), can interact with other connected devices or with distributed computing infrastructures such as Clouds, for instance to store information or perform computations. The growth in the number of connected objects and supporting infrastructures poses scientific challenges. These notably include managing scale, the heterogeneity of the communication networks used (Ethernet, WiFi, 3G, etc.), the migration of computations between objects and supporting infrastructures, and their energy consumption.

The development of IoT equipment, the popularization of mobile devices and emerging wearable devices bring new opportunities for context-aware applications in Cloud computing environments [4]. Since 2008, the U.S. National Intelligence Council has listed the IoT among the six technologies most likely to impact U.S. national power by 2025 [5]. The potential disruptive impact of IoT relies on its pervasiveness: it should constitute an integrated heterogeneous system connecting an unprecedented number of physical objects to the Internet [4]. Vehicles and their numerous sensors are a basic example of such objects.

Among the many challenges raised by IoT, one is currently receiving particular attention: making computing resources easily accessible from connected objects to process the huge amount of data streaming out of them. Cloud computing has historically been used to enable a wide range of applications. It can naturally offer the following:

- distributed sensory data collection;
- global resource and data sharing;
- remote and real-time data access;
- elastic resource provisioning and scaling;
- pay-as-you-go pricing models [6].

However, serving IoT requires extending the classical centralized Cloud computing architecture towards a more distributed one that includes computing and storage nodes installed close to users and physical systems [7]. Such an edge Cloud architecture must address flexibility, scalability and data privacy issues to provide efficient computational offloading services [8].

While offloading computation to the edge can be beneficial from a Quality of Service (QoS) point of view, from an energy perspective it relies on resources that are less energy-efficient than those of centralized Cloud data centers [9]. On the other hand, with the increasing number of applications moving to the Cloud, it may become untenable to meet the growing energy demand, which is already reaching worrying levels [10]. Edge nodes could slightly alleviate this consumption. They could relieve data centers of part of their overwhelming power load [9], and they could reduce data movement and network traffic. In particular, as edge Cloud infrastructures are smaller than centralized data centers, they can make better use of renewable energy [11].

Meanwhile, IoT involves billions of connected devices that communicate mainly through wireless networks, so their power consumption is a major concern and a limitation for the widespread adoption of IoT [12]. An IoT device does not consume much power by itself, typically from a few milliwatts to a few watts [13,14]. Yet, the increasing number of devices produces a scale effect. It also has a non-negligible impact on the Cloud infrastructures that provide the computing power IoT devices need to offer services [15]. To cope with the traffic increase caused by IoT devices, Cloud computing infrastructures have started to explore newly proposed distributed architectures. These include, in particular, edge Cloud architectures, where small data centers are located at the edge of the Cloud, typically in Internet Service Providers’ (ISP) edge infrastructures [16,17].
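
The sketch below makes the scale effect concrete by multiplying a per-device power draw by a device count. It is a back-of-the-envelope illustration only, built on these assumptions:

- The helper names `fleet_power_watts` and `daily_energy_kwh` are hypothetical.
- The 5 mW and 1 W per-device figures are assumed points within the range quoted above.
- The 30 billion count is the forecast of connected devices from the earlier paragraph. That forecast also includes smartphones, tablets and computers, not IoT devices alone.

```python
# Hypothetical back-of-the-envelope illustration of the IoT scale effect.
# Device counts and per-device powers are assumed values, not measurements.


def fleet_power_watts(num_devices: int, power_per_device_w: float) -> float:
    """Aggregate power draw (W) of num_devices identical, always-on devices."""
    return num_devices * power_per_device_w


def daily_energy_kwh(power_w: float, hours: float = 24.0) -> float:
    """Energy (kWh) consumed by a constant power draw sustained for `hours`."""
    return power_w * hours / 1000.0


if __name__ == "__main__":
    devices = 30_000_000_000  # order of magnitude of the connected-device forecast
    for per_device_w in (0.005, 1.0):  # assumed 5 mW and 1 W per device
        total_w = fleet_power_watts(devices, per_device_w)
        print(f"{per_device_w} W/device -> {total_w / 1e6:.0f} MW, "
              f"{daily_energy_kwh(total_w):.3g} kWh/day")
```

Even at an assumed 5 mW per device, the aggregate reaches the order of a hundred megawatts. That is before counting any network or Cloud consumption.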

The current state of the art offers numerous studies on energy models for IoT devices [18,19] and Cloud infrastructures [20,21]. To the best of our knowledge, however, none of them provides the overall picture. It is thus hard to estimate, for instance, the energy consumption that the growing number of IoT devices induces on Cloud infrastructures. The issue lies in obtaining an end-to-end energy estimation of all the involved devices and infrastructures, including ISP network devices and Cloud servers. Such results could also serve to identify which part consumes the most, and hence where energy-efficiency efforts should focus.

In this paper, we investigate the end-to-end energy consumption of IoT platforms. Our aim is to evaluate, on a concrete use case, the benefits of edge computing platforms for IoT in terms of energy consumption. We propose end-to-end energy models for estimating the consumption when offloading computation from the objects to the edge or to the core Cloud. The estimate depends on the number of devices and the desired application QoS, in particular the trade-off between performance (response time) and reliability (service accuracy).
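
The comparison described above can be sketched as an additive, per-part power breakdown. The sketch rests on these assumptions:

- Servers follow a linear power model: idle power plus a load-proportional term, a common modelling choice.
- A single access point is shared by all devices.
- Each placement has a fixed per-bit network energy.
- Streams fill servers one at a time.
- Edge servers are deliberately given fewer streams per server, to reflect the lower energy efficiency mentioned earlier.

Every class, function and number in it is hypothetical and chosen only for illustration. It is not the paper's model, and it ignores response time and accuracy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerProfile:
    """Linear server power model: P(u) = p_idle + (p_max - p_idle) * u, u in [0, 1]."""
    p_idle_w: float
    p_max_w: float
    streams_per_server: int  # hypothetical number of streams one server can analyse

    def power_w(self, utilization: float) -> float:
        u = min(max(utilization, 0.0), 1.0)  # clamp utilization to [0, 1]
        return self.p_idle_w + (self.p_max_w - self.p_idle_w) * u


@dataclass(frozen=True)
class Placement:
    name: str
    server: ServerProfile
    network_j_per_bit: float  # hypothetical energy per bit between device and servers


def cloud_power_w(n_streams: int, server: ServerProfile) -> float:
    """Power of the fewest servers hosting n_streams, filling servers one at a time."""
    full, rest = divmod(n_streams, server.streams_per_server)
    power = full * server.power_w(1.0)
    if rest:  # one partially loaded server for the remaining streams
        power += server.power_w(rest / server.streams_per_server)
    return power


def end_to_end_power_w(n_devices: int, device_w: float, ap_w: float,
                       bitrate_bps: float, placement: Placement) -> dict:
    """Per-part power breakdown (W): IoT devices plus access point, network, Cloud."""
    return {
        "iot": n_devices * device_w + ap_w,  # one shared access point (assumed)
        "network": n_devices * bitrate_bps * placement.network_j_per_bit,  # J/bit * bit/s = W
        "cloud": cloud_power_w(n_devices, placement.server),  # one stream per device
    }


if __name__ == "__main__":
    # All figures below are illustrative assumptions, not measurements from the paper.
    edge = Placement("edge", ServerProfile(50.0, 100.0, 4), network_j_per_bit=2e-8)
    core = Placement("core", ServerProfile(100.0, 250.0, 16), network_j_per_bit=1e-7)
    for n in (1, 10, 100):
        for placement in (edge, core):
            parts = end_to_end_power_w(n, device_w=1.5, ap_w=5.0,
                                       bitrate_bps=2e6, placement=placement)
            total = sum(parts.values())
            dominant = max(parts, key=parts.get)
            print(f"n={n:3d} {placement.name}: total={total:8.1f} W, largest part={dominant}")
```

With these made-up figures:

- The Cloud part is the largest in every case.
- The edge placement is cheaper only for a single device, and the core placement wins at 10 and 100 devices.

Substituting measured per-part values would turn the same structure into a breakdown that shows which part dominates in practice.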

Highlights

Abstract

1. Introduction

2. Related work

2.1. Offloading data to the edge

2.2. Energy consumption of network and cloud devices

3. Driving use case

3.1. Application characteristics

3.2. Cloud infrastructure for data stream analysis

4. System model and assumptions

4.1. IoT part

4.2. Networking part

4.3. Cloud part: Edge and core model

5. Evaluation

5.1. IoT devices consumption

5.2. Setup for the cloud and networking parts

5.3. VM size and time analysis

5.4. Edge and core Clouds’ energy consumption

5.5. Application’s accuracy

5.6. End-to-end energy consumption

6. Discussion

7. Conclusion

References