دانلود رایگان مقاله رسيدن به شباهت معنایی تصوير از طريق دسته بندی حاصل از جمع سپاری

چكیده

تعیین شباهت بین تصاویر از جمله دسته بندی تصویر ،برچسب زنی تصویر و همچنین بازیابی ، مرحله اساسی در بسیاری از برنامه های كاربردی است . روش های اتوماتیك برای ارزیابی شباهت اغلب ناكارآمد است و وقتی كه زمینه معنایی برای كار نیاز است نیاز به قضاوت انسان پیش می آید چنین قضاوتهائی را می توان از طریق فنون جمع سپاری بر اساس كارهای ارائه شده توسط كاربران وب جمع آوری نمود. با این حال برای ممكن ساختن برآورد شباهت تصاویر با زمان و هزینه معقول ایجاد كارها برای مقدار انبوه باید به طریقه دقیقی انجام شود . ما مشاهده كردیم كه فواصل بین همسایگی های محلی اطلاعات ارزشمندی فراهم می كند كه به ما این امكان را می دهد كه معیارشباهت كلی را سریع و دقیق ایجاد كنیم. این ملاحظه كلیدی ما را به سمت راه حلی براساس وظائف دسته بندی ومقایسه تصاویر نسبتاً مشابه هدایت می كند درهرجستجو،اعضای گروه مجموعه كوچكی از تصاویر را درداخل صندوق های خود جمع آوری می كنند . نتایج حاكی از شباهت های نسبی زیاد بین عكس ها می باشد ، كه برای ایجاد معیار شباهت مورد استفاده قرار گرفته اند. این معیاربه صورت تصاعدی اصلاح می شود و برای ترتیب دادن تحقیقات بهتر ومكانی تر در تكرارهای بعدی بهبود می یابد . ما موثر بودن روش خودمان را بر روی مجموعه داده ها وقتی زمینه میدانی وجود داشته باشد و روی مجموعه ای از تصاویر در صورتی كه شباهتهای معنایی را نمی توان اندازه گیری كرد اثبات می كنیم . درحالت خاص نشان می دهیم روش ما گزینه بهتری از روش های مرجع است و موثر بودن تحقیقات جمع آوری و فرآیند اصلاح ما را بهبود می بخشد .

1. مقدمه

درسالهای اخیر پیشرفت های بسیاری در ضبط عكس در ابزارهای همراه حاصل شده است كه كاربران نهائی را تشویق می كند عكس های بیشتری با كیفیت بالاتر ضبط نمایند.در نتیجه این امر درمجموعه های عكس، هم در رایانه های شخصی و هم در وب سایت هائی نظیر فیسبوك،فلیكرواینستاگرام وفوردائمی وجود دارد. چنین مجموعه های بی شماری نیاز به روش هائی برای دسته بندی عكس ها ، برچسب زنی تصویر و به ویژه ذخیره عكس دارد كه به كاربران این اجازه را می دهد به سرعت عكس مناسب برای نیازشان را بیابند.این روش ها ضرورتاً متكی بر موجود بودن شباهت های دوبدو بین دو عكس در مجموعه هستند.

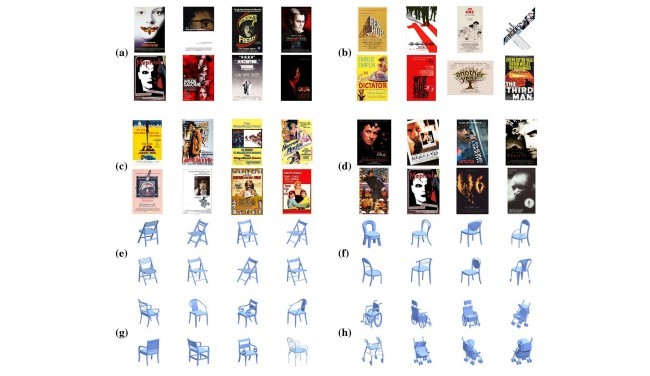

بسیار دشوار است كه یك معیار فاصله ای تعیین كنیم تا شباهت شناخت حضوری یا معنایی بین تصاویر را به خوبی ضبط كند. روش های تحلیلی برای محاسبه چنین معیار زمانی كه شباهت ها از زمینه معنایی بر می آید ناكارآمد است . این ناكارآمدی ممكن است شامل روابط فرّار نظیر هیجان و احساس مشابه كه توسط تصویرالقا می شود.(مثلاً تصایری كه ترس یا یا آرامش را انتقال می دهند)تصاویر اشیائی كه از لحاظ معنائی مرتبط هستند(مثلاً انواع مختلف وسائل باغ) ، همانندی بین افرادی كه عكسشان گرفته شده و غیره .به عنوان نمونه به شباهت بین تصاویر پوستر فیلم در شكل یك دقت كنید . شناسائی چنین شباهتهائی معمولاً توسط انسان به آسانی انجام می شود ، اما با این حال همراه باعث بروز ایراد مشكل شدید محاسباتی خواهد بود.

بنابر این راه حل علمی جمع آوری اطلاعات درباره شباهت های معنائی بین تصاویر افراد است ، برای مثال استفاده از تكنیك جمع سپاری .این روند در كار اخیر برای جمع آوری معیارهای نوع شباهت اثر بخش بوده است. [9,13]. كارنوعی مقایسه كه گروه انجام می دهد از فرم های زیرتبعیت می كند: سه تصویرA ، B و C داده شده است ، انتخاب كنید كه A بیشتر شبیه B است تا C ( پرسش سه گانه) . با فرض پاسخهای درست پرسش ، پرسش درباره هرتصویر ، پرسش سه گانه نتیجه معیار شباهت نسبی در كل مجموعه تصاویر را در پی خواهد داشت . با این حال تعداد سه گانه ها (پرسش های سه گانه) بیش از حد زیاد است . بنابراین تنها یك نمونه از تصاویر سه گانه مورد پرسش قرار گرفت و بقیه بر اساس حالت های تصویر برگزیده برآورد گردید. [9,13].

چالش دیگری در این جنبه آن بود كه افراد اغلب نیاز به] دانستن[ شرایط دارند تا بتوانند كارهارا انجام دهند . [9,13].برای مثال به سه گانه در شكل 2 توجه كنید. آیا تصویر تصویر پلی در لندن (b) از زاویه ای متفاوت به پلی دیگری در لندن از زاویه دیگر (c) شبیه است یا به تصویری از پلی در پاریس با همان زاویه تصویر a ؟ در زمینه وسیع تر اغلب واضح تر می شود كه كدام گزینه منطقی است ، مثلاً در زمینه شكل 3 تصویر (b) بیشتر شبیه به تصویر (c) است تا (a) .

در این كار ما روند جایگزینی برای یادگیری شباهتهای تصویر براساس دسته بندی پرسش ها ی ارائه شده به افراد را پیش رو قرار داده ایم . به جای پرسش ها در مورد سه تصویر ، به افراد گروه مجموعه كوچی از تصاویر داده شد و خواسته شد تا آنها را با استفاده از رابط كاربری گرافیكی در داخل صندوق های تصاویر مشابه كشیده و رها (دراگ اند دراپ) كنند ( شكل 4 را ببینید) . در حالی كه یك كار مجزای دسته بندی نیاز به تلاش بیشتری نسبت به مقایسه سه تصویر نیاز دارد روش ما دونتیجه مهم به همراه داشت . اول ، نتایج یك كار دسته بندی منفرد اطلاعات مهمی در دسترس قرار می دهد كه معادل نتایج كارهای زیادتری نسبت به مقایسه سه گانه است : تصاویر قرار گرفته در یك صندوق روی هم رفته به همدیگر شبیه تر هستند تا تصاویر سایر صندوق ها . دوم ، هرپرسشی برای گروه زمینه دیگری ایجاد می كند كه آنها را قادرمی سازد مقایسه معنادار و دارای اعتباری انجام دهند.

مشاهده كلیدی این كار آن است كه معیار شباهت را می توان به صورت اثر بخش تری از تصاویر شبیه به هم نسبت به تصاویری كه شباهتی به هم ندارند تدوین كرد. این به ویژه در زمینه شباهتهای معنایی و در صورتی كه شباهتهای مكانی بیشتر معنادار است حقیقت دارد. با پیگیری این مشاهدات ، ما الگوریتم توافقی جدیدی ایجاد كردیم كه منظور از آن تدوین پرسشنامه هائی است كه حتی الامكان از یك محل باشند . در اینجا چالش این است كه شبهات ها از قبل معلوم نیستند . بنابر این الگوریتم ما به صورت تكراری كار می كند. در هر مرحله مابرای گروه پرسش ایجاد كرده و ارائه می دهیم.بعد ازجمع آوری اطلاعات به تدریج پرسش ها را اصلاح می كنیم تا روی تصاویر مشابه در همسایگی های نزدیكتر محلی تمركز كنیم . مقایسات محلی شباهت در فضای اقلیدسی جای داده می شوند تا برآورد اصلاح شده برای معیار كلی بدست آید. سپس این معیار اصلاح شده در مرحله بعدی برای محاسبه پرسش نامه هائی كه بیشتر روی مكان تاكید دارند به عنوان ابزار مورد استفاده قرار می گیرند. این روش پیش رونده برآورد شباهت معنادار را همگرا می سازد.

ارزیابی و بررسی آزمایشی برای سنجش كارآمد بودن روش ما تكنیك را هم با داده های ساختگی و داده های واقعی گروه در سیستم نمونه به كار بستیم . اول ما تكنیك خود را بر روی دو مجموعه داده از تصاویر در حالتی كه معلوم بود آزمودیم ، نتایج را آزمایش كردیم و با روش پایه كه از تعداد مساوی پرسش نامه با محل واقعی معلوم استفاده می كرد اما به صورت تصادفی انتخاب شده بودند مقایسه كردیم . دوم ، ما تصاویر k-NN را برای مجموعه داده های تصاویر زمینه میدانی درحالتی كه محل واقعی معلوم نبودونتایج را به صورت دستی ارزیابی كردیم . در آخر ما اثر پارامترهائی نظیر تعداد مراحل و پرسش نامه ها را در مجموعه آزمایشات ساختگی بررسی كردیم .نتایج آزمایشی ما كارآمدی روش ما را برای محاسبه شباهت معنایی تصویر كه منحصراً برا اساس پاسخ های گروه است را ، بهبود می بخشد و این در حالی است كه از تعداد كمی از پرسشنامه دسته بندی استفاده شده است .

Abstract

Determining the similarity between images is a fundamental step in many applications, such as image categorization, image labeling and image retrieval. Automatic methods for similarity estimation often fall short when semantic context is required for the task, raising the need for human judgment. Such judgments can be collected via crowdsourcing techniques, based on tasks posed to web users. However, to allow the estimation of image similarities in reasonable time and cost, the generation of tasks to the crowd must be done in a careful manner. We observe that distances within local neighborhoods provide valuable information that allows a quick and accurate construction of the global similarity metric. This key observation leads to a solution based on clustering tasks, comparing relatively similar images. In each query, crowd members cluster a small set of images into bins. The results yield many relative similarities between images, which are used to construct a global image similarity metric. This metric is progressively refined, and serves to generate finer, more local queries in subsequent iterations.We demonstrate the effectiveness of our method on datasets where ground truth is available, and on a collection of images where semantic similarities cannot be quantified. In particular, we show that our method outperforms alternative baseline approaches, and prove the usefulness of clustering queries, and of our progressive refinement process.

1 Introduction

In recent years, there have been many advances in image capturing capabilities of mobile devices, encouraging end users to capture more images of higher quality. As a result, there is an abundance of constantly growing collections of images, both on personal computers and on websites such as Facebook, Flickr and Instagram. Such vast collections require efficient methods for image categorization, imagelabeling, and in particular image retrieval, which allows users to quickly locate an image suitable for their needs. These methods necessarily rely on the availability of pairwise similarities between images in the collection.

It is extremely hard to define a distance metric that would capture well the intuitive or semantic similarity between images. State-of-the-art analytical methods for computing such a metric fall short when similarities are derived from a broad semantic context. These may include elusive relations such as a similar emotion or sensation evoked by the images (e.g., images that convey “fear” or “comfort”); images of things which are semantically related (e.g., different types of garden furniture); likeness between the photographed people; and so on. Consider, for instance, the similarity between the movie posters in Fig. 1. Identifying such similarities is usually easily done by a human observer, but pose a hard computational problem nonetheless.

The natural solution is thus gathering information about semantic similarities between images from people, for example using a crowdsourcing technique.1 This approach was taken in recent work [9,13] to collect style similarity measures. The typical comparison task that the crowd performs is of the following form: given three images A, B, and C, choose whether A is more similar to B or to C (a triplet query). Assuming consistent query responses, querying every image triplet yields the full relative similarity metric over the set of images. However, the number of triplets is prohibitively large. Thus, typically only a sample of the triplets are queried and the rest are estimated based on extracted image features [9,13].

Another challenge in this respect is that people often need context to perform comparison tasks. For example, consider the triplet in Fig. 2. Is the image of a bridge in London (b) more similar to another image of a different bridge in London from a different angle (c) or to an image of a Parisian bridge from the same angle (a)? In a larger context, it often becomes clearer which option is more reasonable, e.g, in the context of Fig. 3 image (b) is more similar to (c) than (a).

In this work, we propose an alternative approach for learning image similarities based on clustering queries posed to the crowd. Instead of queries of three images, crowd members are given a small set of images and are asked to cluster them into bins of similar images using a drag-and-drop graphical UI (see Fig. 4). While a single clustering task requires more effort than comparing three images, our approach has two important advantages. First, the results of a single clustering task provide a great deal of information that is equivalent to many triplet comparison tasks: images placed in the same bin are considered closer to one another than to images in other bins. Second, each query provides crowd members with additional context that assists them in performing a more faithful and meaningful comparison.

A key observation of this work is that a similarity metric can be constructed more efficiently by performing comparisons on similar images rather than non-similar ones. This is true in particular in the context of semantic similarities, where local similarities are often more meaningful. Following this observation, we develop a novel, adaptive algorithm that aims to generate queries that are as local as possible. The challenge here is that similarities are unknown in advance. Thus, our algorithm works iteratively. At each phase, we generate and pose clustering queries to the crowd. As information is collected, we progressively refine the queries to focus on similar images in a narrower local neighborhood. Local similarity comparisons are embedded in Euclidian space to obtain a refined estimation for the global similarity metric. This refined metric is then leveraged for computing more locally focused queries in the next phase. This progressive method efficiently converges to a meaningful similarity estimate.

Evaluation and experimental study To test the efficiency of our approach, we implement our technique in a prototype system, and use it to conduct a thorough experimental study, with both synthetic and real crowd data. First, we test our technique over two image datasets where the ground truth is known, examine the results and compare them to a baseline approach that uses the same number of queries but chooses them randomly. Second, we compute the k-NN images for real-world image datasets, where the ground truth is unknown, and evaluate the results manually. Last, we study the effect of parameters such as the number of phases and queries in a series of synthetic experiments. Our experimental results prove the efficiency of our approach for computing semantic image similarity based solely on the answers of the crowd, while using a relatively small number of clustering queries.

چكيده

1. مقدمه

2. كار مرتبط

3. الگوريتم

4. آزمايشات

4.1 آزمايشات گروه با زمينه ميدانی

4.2 آزمايشات گروه با مجموعه داده های دنيای واقعی

4.3 آزمايشات ساختگی

5 نتيجه گيری

منابع

Abstract

1 Introduction

2 Related work

3 Algorithm

4 Experiments

4.1 Crowd experiments with ground truth

4.2 Crowd experiments with real-world datasets

4.3 Synthetic experiments

5 Conclusion

References